How to Implement AI Risk Management Strategies

Organizations worldwide are racing to implement artificial intelligence, but many overlook a critical component: comprehensive risk management. As AI systems become more sophisticated and widespread, the potential for unintended consequences grows with them. Smart enterprises recognize that successful AI adoption requires more than deploying models; it demands a structured approach to identifying, assessing, and mitigating AI-related risks.

This guide provides actionable strategies for implementing robust AI risk management frameworks that protect your organization while enabling innovation. You'll learn how to build governance structures, establish monitoring systems, and ensure compliance with emerging regulations.

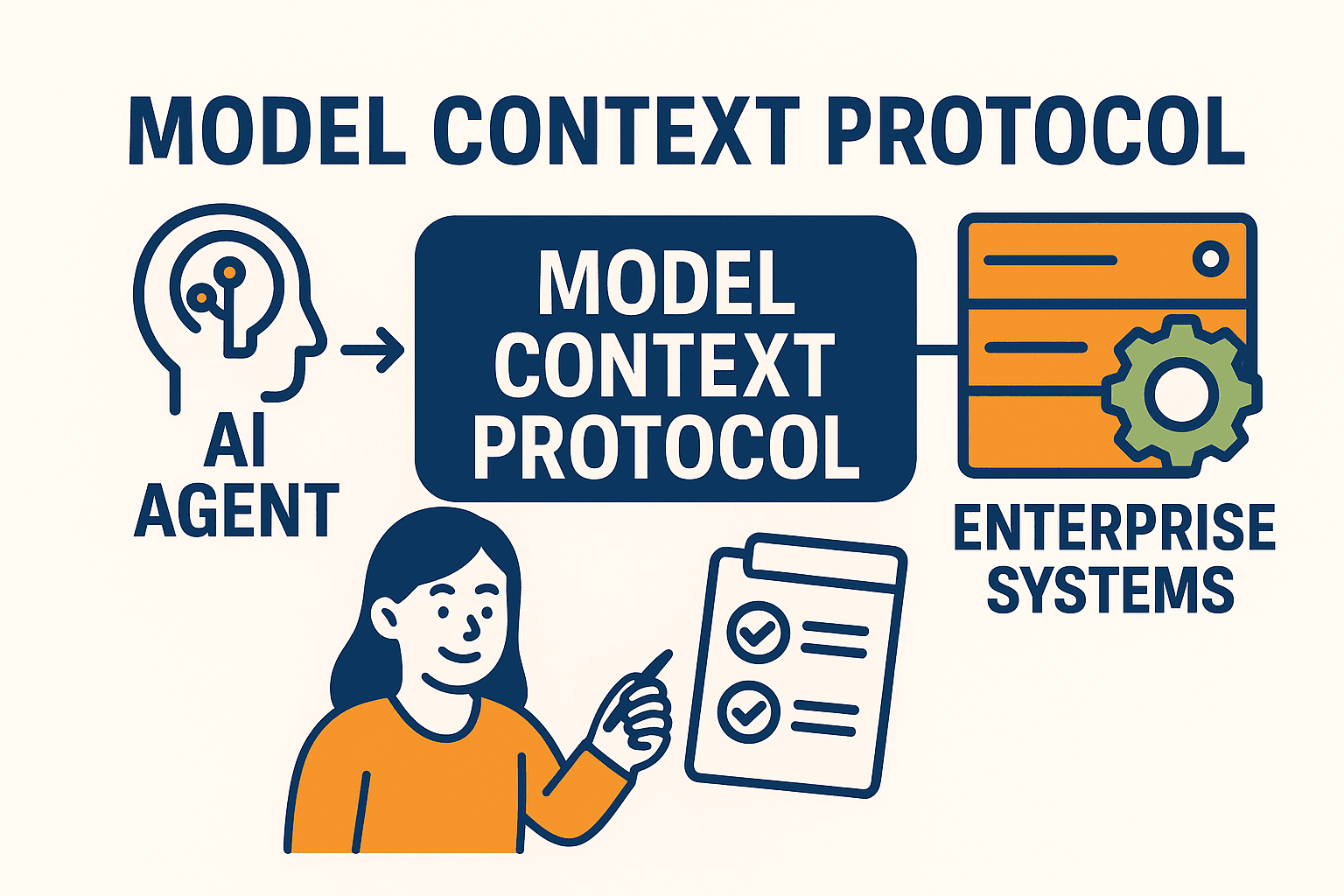

AI risk management encompasses the systematic identification, assessment, and mitigation of potential negative outcomes from artificial intelligence systems. This discipline combines traditional risk management principles with AI-specific considerations like algorithmic bias, model drift, and explainability requirements.

The business case for proactive artificial intelligence governance is compelling. Organizations that implement comprehensive AI risk frameworks report 40% fewer AI-related incidents and significantly lower remediation costs. Consider that a single biased algorithm can result in millions in legal settlements, regulatory fines, and reputation damage.

Recent industry data suggests that 85% of AI projects fail to deliver expected business value, often due to inadequate risk management. The cost of prevention, typically 2-5% of total AI investment, pales in comparison to the potential losses from AI failures, which can reach millions of dollars in direct costs alone.

Algorithmic bias represents one of the most pervasive AI risks. Training data often contains historical prejudices that AI systems learn and amplify. For example, hiring algorithms have been found to discriminate against women and minorities, while facial recognition systems show higher error rates for certain demographic groups.

These biases emerge from multiple sources: incomplete datasets, historical discrimination in training data, and inadequate testing across diverse populations. The business impact extends beyond legal liability to include damaged customer relationships and regulatory scrutiny.
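One simple way to test for this kind of bias is to compare how often each group receives the favorable outcome. The sketch below is illustrative only: the group labels, the sample data, and the 0.8 review threshold (the "four-fifths" rule of thumb used in US employment contexts) are assumptions, not figures from this guide, and a real audit would use several metrics.

```python
from collections import defaultdict

def selection_rates(records):
    """Return the share of favorable outcomes per group.

    `records` is an iterable of (group, selected) pairs, where `selected`
    is True when the model produced the favorable outcome.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {g: positives[g] / totals[g] for g in totals}

def disparate_impact_ratio(rates):
    """Lowest group selection rate divided by the highest one."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected, so no group is favored
    return min(rates.values()) / highest

# Hypothetical screening outcomes: (group label, advanced to interview?)
sample = (
    [("A", True)] * 40 + [("A", False)] * 60
    + [("B", True)] * 25 + [("B", False)] * 75
)
rates = selection_rates(sample)
ratio = disparate_impact_ratio(rates)
needs_review = ratio < 0.8  # assumed threshold, adjust to your own policy
print(rates, round(ratio, 3), needs_review)
```

A ratio well below 1.0 does not prove discrimination on its own, but it is a useful trigger for a closer look at the training data and features.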

AI security presents unique challenges beyond traditional cybersecurity. Adversarial attacks can manipulate AI models by introducing carefully crafted inputs that cause incorrect outputs. Model poisoning attacks corrupt training data, while data extraction attacks can reveal sensitive information from AI systems.

These vulnerabilities require specialized security measures that traditional IT security frameworks don't address. Organizations must implement AI-specific security controls alongside conventional cybersecurity practices.

AI systems often process vast amounts of personal data, creating significant privacy risks. Regulations like GDPR and CCPA impose strict requirements on data processing, while emerging AI-specific regulations add further compliance obligations.

Key privacy challenges include data minimization, consent management, and the right to explanation. Organizations must balance AI performance with privacy protection, often requiring innovative technical solutions.

The "black box" nature of many AI systems creates accountability challenges. When AI systems make decisions that affect individuals or business outcomes, stakeholders need to understand the reasoning behind those decisions. Explainable AI becomes crucial for regulatory compliance, customer trust, and internal governance.

Expert Insight

Organizations that invest in explainable AI from the start reduce compliance costs by up to 60% compared to those that retrofit transparency features later. Building interpretability into AI systems during development is far more cost-effective than adding it afterward.

Begin by creating a comprehensive inventory of all AI systems within your organization. Classify each system based on risk level, considering factors like decision impact, data sensitivity, and regulatory requirements. Develop risk scoring matrices that account for both likelihood and potential impact of AI-related incidents.
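A risk scoring matrix of this kind can be sketched in a few lines. The example below assumes a 1-to-5 scale for both likelihood and impact and uses illustrative tier cut-offs; the system names and scores are hypothetical, and your own matrix may weight factors differently.

```python
from dataclasses import dataclass

@dataclass
class AISystem:
    name: str
    likelihood: int  # 1 (rare) to 5 (almost certain), assumed scale
    impact: int      # 1 (negligible) to 5 (severe), assumed scale

    @property
    def score(self) -> int:
        # Classic likelihood x impact score, ranging from 1 to 25.
        return self.likelihood * self.impact

def risk_tier(score: int) -> str:
    """Map a 1-25 score to a tier; the cut-offs are illustrative."""
    if score >= 15:
        return "high"
    if score >= 6:
        return "medium"
    return "low"

# Hypothetical inventory entries.
inventory = [
    AISystem("support-chatbot", likelihood=3, impact=2),
    AISystem("resume-screening", likelihood=4, impact=5),
    AISystem("log-anomaly-detector", likelihood=2, impact=2),
]

# Review the riskiest systems first.
ranked = sorted(inventory, key=lambda s: s.score, reverse=True)
for system in ranked:
    print(f"{system.name}: score={system.score}, tier={risk_tier(system.score)}")
```

Keeping the inventory as structured data makes it easy to re-score systems when their use or data sensitivity changes.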

Form cross-functional teams that include data scientists, legal experts, compliance officers, and business stakeholders. This diverse perspective ensures comprehensive risk identification and assessment.

Establish AI oversight committees with clear authority and accountability. These committees should include senior leadership and subject matter experts who can make binding decisions about AI risk tolerance and mitigation strategies.

Create detailed AI use policies that define acceptable applications, prohibited uses, and approval processes. These policies should address AI ethics considerations, data handling requirements, and performance standards.

Implement robust model validation and testing protocols that include bias testing, performance monitoring, and security assessments. Establish continuous monitoring systems that track model performance, data drift, and potential security threats.
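As one possible drift check, the sketch below computes a population stability index (PSI) between a baseline sample of a feature and a recent sample. PSI is only one of several drift measures, and the bin count, the floor for empty bins, and the commonly quoted alert level of about 0.25 are assumptions to tune for your own data.

```python
import math

def psi(expected, actual, bins=10):
    """Population stability index between a baseline and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Hypothetical feature values: a baseline and a shifted production sample.
baseline = [i / 100 for i in range(100)]
shifted = [v + 0.3 for v in baseline]

drift_score = psi(baseline, shifted)
drift_alert = drift_score > 0.25  # assumed alert threshold
print(round(drift_score, 3), drift_alert)
```

In practice a check like this would run on a schedule for each monitored feature, with alerts routed into the incident response process.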

Develop incident response procedures specifically for AI-related issues. These procedures should address model failures, bias incidents, security breaches, and regulatory violations.

The NIST AI Risk Management Framework (AI RMF 1.0) provides a structured approach to responsible AI development and deployment. The framework organizes AI risk management into four core functions: Govern, Map, Measure, and Manage.

The Govern function establishes organizational culture and leadership commitment to AI risk management. Map identifies and categorizes AI risks specific to your context. Measure involves analyzing and tracking identified risks. Manage implements appropriate responses to identified risks.

Organizations can adapt NIST guidelines based on their size and complexity. Smaller organizations might implement simplified versions of the framework, while large enterprises may need more comprehensive approaches. The key is ensuring the framework integrates with existing risk management processes rather than creating parallel systems.

The regulatory landscape for AI continues to evolve rapidly. The EU AI Act establishes comprehensive requirements for high-risk AI systems, while US executive orders have directed federal agencies to follow AI safety standards. Industry-specific regulations in healthcare, finance, and other sectors add further compliance layers.

Successful AI compliance requires robust documentation and audit trails. Organizations must maintain records of AI system development, testing, deployment, and monitoring activities. This documentation proves essential during regulatory audits and incident investigations.
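One way to make such records tamper-evident is to chain them together with hashes, so that editing an earlier entry invalidates everything after it. The following is a minimal sketch using only the Python standard library; the event names and fields are hypothetical, and a production trail would also need timestamps, access controls, and durable storage.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first record

def _digest(body):
    # Serialize with sorted keys so the hash is stable across runs.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

def append_entry(log, event, details):
    """Append an audit record whose hash covers the previous record's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"event": event, "details": details, "prev_hash": prev_hash}
    log.append({**body, "hash": _digest(body)})

def verify(log):
    """Return True when no record has been altered, removed, or reordered."""
    prev_hash = GENESIS
    for record in log:
        body = {k: record[k] for k in ("event", "details", "prev_hash")}
        if record["prev_hash"] != prev_hash or record["hash"] != _digest(body):
            return False
        prev_hash = record["hash"]
    return True

# Hypothetical lifecycle events for one model.
trail = []
append_entry(trail, "model_trained", {"model": "credit-scoring", "version": "1.2"})
append_entry(trail, "bias_test_passed", {"model": "credit-scoring", "ratio": 0.91})
intact_before = verify(trail)

trail[0]["details"]["version"] = "9.9"  # simulate tampering with an old record
intact_after = verify(trail)
print(intact_before, intact_after)
```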

Third-party AI vendor risk assessment becomes crucial as organizations increasingly rely on external AI services. Establish vendor evaluation criteria that address security, bias, explainability, and compliance requirements.

Effective AI auditing requires measurable indicators of risk management performance. Track metrics like bias detection rates, security incident frequency, compliance violation counts, and stakeholder satisfaction scores. These metrics provide objective evidence of program effectiveness and areas for improvement.

Create dashboards that provide real-time visibility into AI risk status across the organization. These dashboards should be accessible to relevant stakeholders and support data-driven decision making.

Implement regular internal audit procedures that assess AI risk management effectiveness. These audits should evaluate policy compliance, control effectiveness, and program maturity. Consider engaging external validators for independent assessments and certification.

Integrate stakeholder feedback into continuous improvement processes. Regular surveys of AI system users, customers, and other stakeholders provide valuable insights into risk management effectiveness and areas for enhancement.

Essential components include governance structures, risk assessment methodologies, technical controls, monitoring systems, incident response procedures, and compliance management processes. These components work together to provide comprehensive AI risk coverage.

High-risk AI systems should undergo quarterly audits, while lower-risk systems may require annual assessments. Continuous monitoring should supplement formal audits to detect emerging risks promptly.
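The cadence above can be turned into a simple overdue check. This sketch assumes a quarter is roughly 91 days and treats every tier other than "high" as annual; the register contents and dates are hypothetical.

```python
from datetime import date, timedelta

QUARTERLY = timedelta(days=91)  # roughly one quarter (assumption)
ANNUAL = timedelta(days=365)

def next_audit_due(last_audit: date, tier: str) -> date:
    """High-risk systems get quarterly audits; every other tier gets annual ones."""
    interval = QUARTERLY if tier == "high" else ANNUAL
    return last_audit + interval

def overdue_systems(last_audits: dict, today: date) -> list:
    """Return names of systems whose next audit date has already passed.

    `last_audits` maps a system name to a (tier, last_audit_date) pair.
    """
    return sorted(
        name
        for name, (tier, last_audit) in last_audits.items()
        if next_audit_due(last_audit, tier) < today
    )

# Hypothetical audit register.
register = {
    "resume-screening": ("high", date(2024, 1, 15)),
    "support-chatbot": ("low", date(2024, 1, 15)),
}
print(overdue_systems(register, today=date(2024, 6, 1)))
```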

Implementation costs typically range from 2-5% of total AI investment, depending on system complexity and risk levels. This investment pays dividends through reduced incident costs and improved regulatory compliance.

Start by mapping AI risks to existing risk categories and leveraging current governance structures. Extend existing policies and procedures to address AI-specific considerations rather than creating entirely separate frameworks.

Teams should combine technical AI expertise with risk management experience, legal knowledge, and business acumen. Consider pursuing relevant certifications and training programs to build necessary capabilities.

Implementing comprehensive AI risk management strategies requires commitment, resources, and expertise, but the investment protects your organization while enabling confident AI adoption. Start with a clear assessment of your current AI landscape, establish governance frameworks, and build monitoring capabilities that evolve with your AI initiatives. Remember that effective AI risk management is not a one-time project but an ongoing capability that grows with your organization's AI maturity. Consider exploring integrated platforms that simplify AI risk management while maintaining the security and control your enterprise demands.