Hybrid AI Deployment: Strategic Enterprise Insights

Enterprise leaders face a critical decision when implementing artificial intelligence: where to deploy AI models and how to balance security, performance, and scalability. The answer increasingly lies in hybrid AI deployment strategies that combine the best of on-premise control with cloud flexibility.

This comprehensive guide explores how modern enterprises can design and implement hybrid AI deployment architectures that drive innovation while maintaining operational control. You'll discover proven strategies for building resilient AI infrastructure that adapts to your organization's unique requirements.

Hybrid AI deployment represents a strategic approach that distributes AI workloads across multiple environments. This architecture enables enterprises to place sensitive data processing on-premise while leveraging cloud resources for scalable compute power.

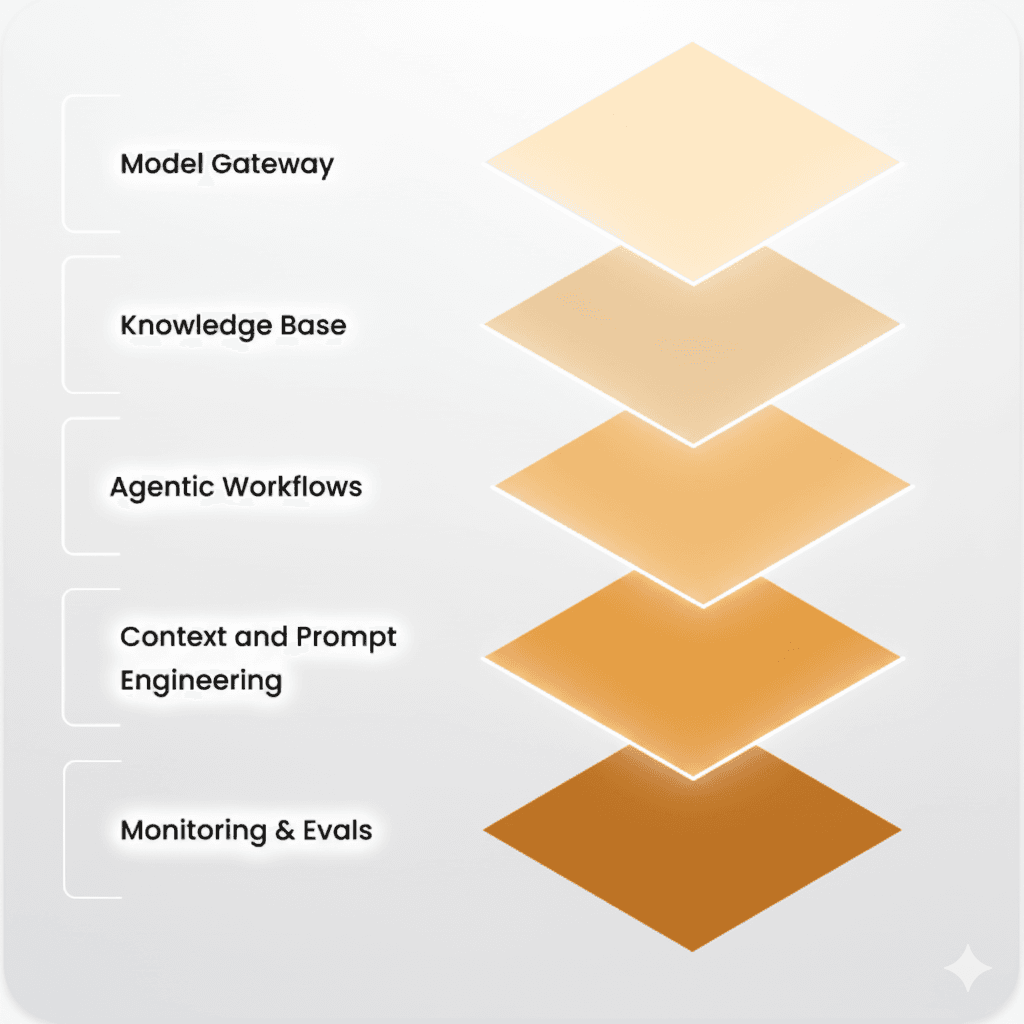

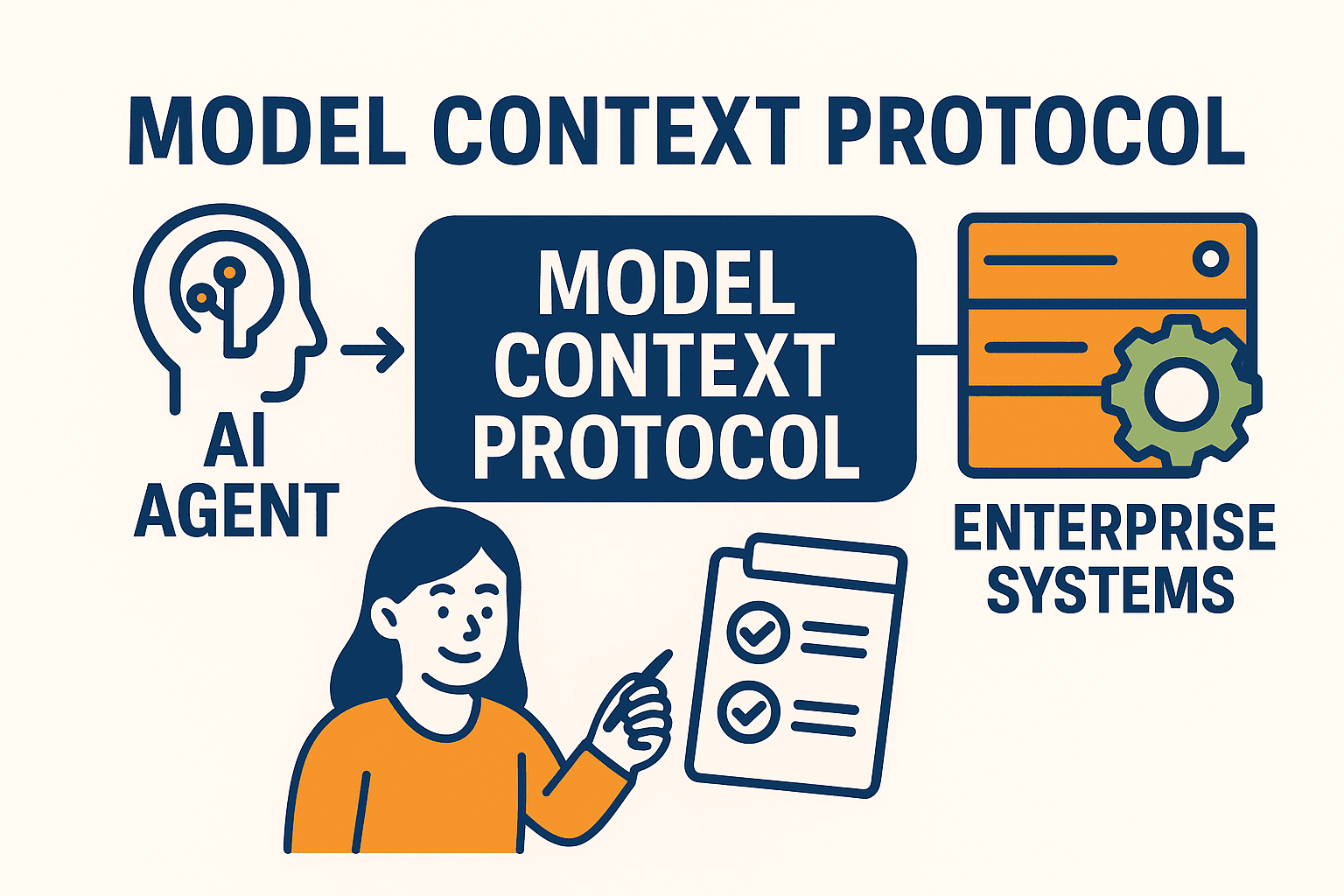

A robust hybrid AI deployment architecture consists of several interconnected components. On-premise infrastructure typically handles data preprocessing, model training on sensitive datasets, and real-time inference for critical applications. These systems require dedicated hardware, storage solutions, and network connectivity designed for AI workloads.

Cloud AI services complement on-premise capabilities by providing elastic compute resources, pre-trained models, and managed services. Integration points between these environments enable seamless data flow and model synchronization while maintaining security boundaries.

Edge computing adds another layer to hybrid AI deployment, bringing inference capabilities closer to data sources. This reduces latency for time-sensitive applications and minimizes bandwidth requirements for data transmission.

Each deployment model offers distinct advantages. Pure cloud solutions provide elastic, on-demand scalability and reduce infrastructure management overhead. However, they may introduce data sovereignty concerns, and their operational costs escalate with usage.

On-premise deployments offer maximum control over data and infrastructure but require significant upfront investment and specialized expertise. Hybrid approaches balance these trade-offs by allowing organizations to optimize placement based on specific workload requirements.

Expert Insight

Organizations implementing hybrid AI deployment report 40% better cost optimization compared to pure cloud solutions, while maintaining 99.9% uptime for critical AI applications through strategic workload distribution.

Successful AI implementation requires a methodical approach that aligns technology capabilities with business objectives. Enterprise AI solutions must address both immediate operational needs and long-term strategic goals.

Begin with a comprehensive evaluation of your current infrastructure capabilities. Assess network bandwidth, compute resources, storage capacity, and security controls. Map these technical capabilities against your AI use cases to identify gaps and opportunities.

Business requirement mapping involves understanding data sensitivity levels, performance requirements, compliance obligations, and budget constraints. This analysis guides decisions about workload placement and resource allocation across hybrid environments.
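Here is a minimal sketch of how such a mapping might drive placement decisions. It assumes a hypothetical `Workload` record and illustrative rules. The 20 ms edge threshold and the order in which rules are checked are placeholders, not recommendations.

```python
from dataclasses import dataclass
from enum import Enum


class Sensitivity(Enum):
    PUBLIC = 1
    INTERNAL = 2
    RESTRICTED = 3


@dataclass
class Workload:
    # Hypothetical fields; replace with the dimensions from your own requirements map.
    name: str
    sensitivity: Sensitivity
    latency_budget_ms: float
    must_stay_in_country: bool
    bursty_compute: bool


def place_workload(w: Workload) -> str:
    """Return 'on_premise', 'edge', or 'cloud' using illustrative rules."""
    # Restricted data or residency obligations keep the workload in-house.
    if w.sensitivity is Sensitivity.RESTRICTED or w.must_stay_in_country:
        return "on_premise"
    # Very tight latency budgets favor inference close to the data source.
    if w.latency_budget_ms < 20:
        return "edge"
    # Bursty, elastic compute needs suit cloud capacity.
    if w.bursty_compute:
        return "cloud"
    # Otherwise keep internal data in-house and send public workloads to the cloud.
    return "on_premise" if w.sensitivity is Sensitivity.INTERNAL else "cloud"


print(place_workload(Workload("faq-chatbot", Sensitivity.PUBLIC, 800, False, True)))  # cloud
```

Writing the rules down as code keeps placement decisions reviewable. In practice, the inputs would come from your own requirements inventory.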

Risk assessment methodologies help identify potential challenges early in the planning process. Consider factors like data privacy regulations, vendor dependencies, integration complexity, and skills availability when designing your AI deployment strategy.

Start with pilot programs that demonstrate value while minimizing risk. Select use cases with clear success metrics and manageable scope. This approach allows teams to gain experience with hybrid AI deployment before scaling to production environments.

Gradual scaling strategies ensure smooth transitions from proof-of-concept to production deployment. Establish clear criteria for moving between phases and maintain flexibility to adjust based on lessons learned.
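Exit criteria are easier to enforce when they are written down as data. The sketch below uses hypothetical metric names and thresholds and reports which criteria a pilot still fails.

```python
from typing import Dict, List, Tuple

# Hypothetical pilot exit criteria: metric name -> (comparison, threshold).
PILOT_EXIT_CRITERIA: Dict[str, Tuple[str, float]] = {
    "accuracy": (">=", 0.90),
    "p95_latency_ms": ("<=", 250.0),
    "error_rate": ("<=", 0.01),
}


def unmet_criteria(metrics: Dict[str, float],
                   criteria: Dict[str, Tuple[str, float]] = PILOT_EXIT_CRITERIA) -> List[str]:
    """Return the names of criteria that are unmeasured or not yet satisfied."""
    failures = []
    for name, (op, threshold) in criteria.items():
        if op not in (">=", "<="):
            raise ValueError(f"unsupported comparison {op!r} for {name}")
        value = metrics.get(name)
        # An unmeasured metric blocks promotion just like a failing one.
        if (value is None
                or (op == ">=" and value < threshold)
                or (op == "<=" and value > threshold)):
            failures.append(name)
    return failures
```

Suppose the pilot reports `{"accuracy": 0.93, "p95_latency_ms": 310.0, "error_rate": 0.004}`. The function returns `["p95_latency_ms"]`, so the pilot would stay in its current phase until latency improves.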

Designing effective hybrid cloud AI infrastructure requires careful consideration of data flow patterns, security requirements, and performance objectives. The architecture must support both current needs and future growth.

Multi-cloud deployment models provide flexibility and reduce vendor lock-in risks. Distribute workloads across providers based on their strengths: for example, use one cloud for training large models and another for real-time inference.

Data residency and sovereignty requirements often dictate where certain workloads can operate. Design your architecture to ensure compliance with local regulations while maintaining operational efficiency.
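One way to keep residency rules enforceable is to check each proposed deployment plan against an explicit policy before rollout. The data classes and region names below are hypothetical.

```python
from typing import Dict, List, Optional, Set, Tuple

# Hypothetical policy: regions where each data class may be processed.
# None means the class carries no residency restriction.
RESIDENCY_POLICY: Dict[str, Optional[Set[str]]] = {
    "eu_personal": {"eu-west", "eu-central", "onprem-frankfurt"},
    "us_health": {"us-east", "onprem-chicago"},
    "public": None,
}

PlanEntry = Tuple[str, str, str]  # (workload, data_class, region)


def residency_violations(plan: List[PlanEntry]) -> List[PlanEntry]:
    """Return every plan entry that would process data outside its allowed regions."""
    violations = []
    for workload, data_class, region in plan:
        if data_class not in RESIDENCY_POLICY:
            # Fail closed: unclassified data is treated as a violation.
            violations.append((workload, data_class, region))
            continue
        allowed = RESIDENCY_POLICY[data_class]
        if allowed is not None and region not in allowed:
            violations.append((workload, data_class, region))
    return violations
```

Unknown data classes are reported as violations. This prevents a new dataset from reaching production before it has been classified.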

Network connectivity between environments requires robust, secure connections with sufficient bandwidth for data synchronization and model updates. Implement redundant connections to ensure high availability.
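Redundant links only help if traffic actually fails over. The minimal sketch below assumes links are listed in priority order and that a plain TCP connect is an acceptable health check. Real deployments often rely on routing protocols or the provider's own failover instead. The hostnames are placeholders.

```python
import socket
from typing import Callable, Optional, Sequence, Tuple

Endpoint = Tuple[str, int]


def tcp_probe(endpoint: Endpoint, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to the endpoint succeeds within the timeout."""
    try:
        with socket.create_connection(endpoint, timeout=timeout):
            return True
    except OSError:  # refused, unreachable, DNS failure, or timeout
        return False


def select_link(links: Sequence[Endpoint],
                probe: Callable[[Endpoint], bool] = tcp_probe) -> Optional[Endpoint]:
    """Return the highest-priority link that passes the probe, or None if all fail."""
    for link in links:
        if probe(link):
            return link
    return None


# Placeholder endpoints for a primary and a backup interconnect.
LINKS = [("dc-link-primary.example.internal", 443),
         ("dc-link-backup.example.internal", 443)]
```

The probe is passed in as a parameter, so a production health check (or a test double) can replace the TCP connect without changing the selection logic.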

Load balancing strategies distribute AI workloads efficiently across available resources. Implement intelligent routing that considers factors like model complexity, data location, and resource availability.
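As an illustration, a router might work in two stages. First, it filters out targets that lack the required hardware or headroom. Then it prefers targets co-located with the data and breaks ties by load. The `Target` and `Request` types and the 85% utilization cap are assumptions made for this sketch.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Target:
    """Hypothetical serving pool in one environment."""
    name: str
    location: str        # e.g. "onprem", "cloud-a"
    has_gpu: bool
    utilization: float   # 0.0 = idle, 1.0 = saturated


@dataclass
class Request:
    data_location: str   # where the input data lives
    needs_gpu: bool      # rough proxy for model complexity


def route(req: Request, targets: List[Target],
          max_utilization: float = 0.85) -> Optional[Target]:
    """Filter on capability and headroom, then prefer locality, then low load."""
    eligible = [
        t for t in targets
        if (t.has_gpu or not req.needs_gpu) and t.utilization < max_utilization
    ]
    if not eligible:
        return None  # caller can queue, shed load, or trigger scale-out
    # False sorts before True, so co-located targets win; ties go to the least loaded.
    return min(eligible, key=lambda t: (t.location != req.data_location, t.utilization))
```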

Auto-scaling mechanisms respond to changing demand patterns automatically. Configure scaling policies that account for AI workload characteristics, including model initialization time and resource requirements.
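A new replica serves nothing until its model has loaded. A scaling policy can therefore size the pool for the demand expected once the warm-up period ends, rather than for current demand. The minimal sketch below assumes a simple linear trend estimate and a known per-replica throughput. All parameter values are illustrative.

```python
import math


def desired_replicas(current_rps: float,
                     rps_trend_per_s: float,
                     warmup_s: float,
                     rps_per_replica: float,
                     headroom: float = 0.2,
                     min_replicas: int = 1,
                     max_replicas: int = 50) -> int:
    """Size the pool for the load expected once new replicas finish loading."""
    # Project demand forward by the model warm-up time using a linear trend.
    projected = max(0.0, current_rps + rps_trend_per_s * warmup_s)
    # Add headroom for spikes, then round up to whole replicas.
    needed = math.ceil(projected * (1 + headroom) / rps_per_replica)
    return max(min_replicas, min(max_replicas, needed))
```

Consider a load of 100 requests per second that is rising by 0.5 per second, with a 120-second warm-up and 20 requests per second per replica. The projected load is 160 requests per second, and the function asks for 10 replicas. Production autoscalers usually also apply a stabilization window before scaling down; this sketch omits one.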

On-premise AI components require robust security frameworks that protect sensitive data while enabling collaboration with cloud resources. Security must be built into every layer of the architecture.

Data protection protocols ensure information remains secure throughout the AI pipeline. Implement encryption for data at rest and in transit, with key management systems that maintain separation between environments.

Access control mechanisms enforce the principle of least privilege across hybrid environments. Use identity and access management systems that provide consistent policy enforcement regardless of workload location.
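One way to express least privilege is a deny-by-default check that is evaluated the same way in every environment. The roles, resources, and grants below are hypothetical.

```python
from dataclasses import dataclass
from typing import FrozenSet, Sequence


@dataclass(frozen=True)
class Grant:
    role: str
    action: str                    # e.g. "read", "deploy"
    resource: str                  # e.g. "dataset:claims-anonymized"
    environments: FrozenSet[str]   # environments where the grant applies


# Hypothetical grants; any request that matches none of them is denied.
GRANTS = (
    Grant("data-scientist", "read", "dataset:claims-anonymized",
          frozenset({"onprem", "cloud"})),
    Grant("ml-engineer", "deploy", "model:fraud", frozenset({"onprem"})),
)


def is_allowed(role: str, action: str, resource: str, environment: str,
               grants: Sequence[Grant] = GRANTS) -> bool:
    """Deny by default: allow only when one grant matches every field."""
    return any(
        g.role == role and g.action == action and g.resource == resource
        and environment in g.environments
        for g in grants
    )
```

The same check evaluates requests from every environment. As a result, a permission granted on-premise does not silently extend to the cloud.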

Audit trail implementation enables comprehensive monitoring and compliance reporting. Track data access, model updates, and system changes across all components of your hybrid AI deployment.
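A hash chain is one common way to make an audit trail tamper-evident. Each entry records the hash of the entry before it, so editing or removing an entry in the middle breaks the links that follow. Anyone who can rewrite the whole log could also recompute every hash. For that reason, a real system would persist entries and anchor the latest hash somewhere the log writer cannot modify. This in-memory version is only a sketch.

```python
import hashlib
import json
import time
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class AuditLog:
    """Append-only, hash-chained audit log (in memory, for illustration)."""

    def __init__(self) -> None:
        self.entries: List[Dict[str, Any]] = []

    @staticmethod
    def _digest(body: Dict[str, Any]) -> str:
        # Sorted keys give a canonical serialization, so hashes are reproducible.
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def record(self, actor: str, action: str, target: str, environment: str) -> Dict[str, Any]:
        body = {
            "ts": time.time(),
            "actor": actor,
            "action": action,
            "target": target,
            "environment": environment,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry = dict(body, hash=self._digest(body))
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute each hash and check that every entry links to its predecessor."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev or self._digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```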

API gateway implementation provides secure, managed access to existing systems. Design APIs that abstract underlying complexity while maintaining performance and security requirements.

Data pipeline modernization ensures efficient flow between legacy systems and modern AI infrastructure. Implement real-time and batch processing capabilities that support various data formats and sources.

Effective AI model deployment requires robust governance frameworks that ensure quality, security, and compliance throughout the model lifecycle.

Version control strategies track model changes and enable rollback capabilities. Implement automated testing and validation processes that verify model performance before deployment.
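The sketch below pairs a version history with a validation gate and a one-step rollback. The `ModelRegistry` class and the accuracy gate are hypothetical. Dedicated model registry tools offer far more, and this code does not mirror any particular product's API.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class ModelRegistry:
    """Hypothetical in-memory registry: registered versions plus live history."""
    versions: Dict[str, dict] = field(default_factory=dict)
    history: List[str] = field(default_factory=list)  # live versions, oldest first

    @property
    def live(self) -> Optional[str]:
        return self.history[-1] if self.history else None

    def register(self, version: str, metrics: dict) -> None:
        # Versions are immutable once registered, so a tag always means one model.
        if version in self.versions:
            raise ValueError(f"version {version!r} is already registered")
        self.versions[version] = metrics

    def promote(self, version: str, validate: Callable[[dict], bool]) -> bool:
        """Make a registered version live only if it passes validation."""
        if not validate(self.versions[version]):
            return False
        self.history.append(version)
        return True

    def rollback(self) -> Optional[str]:
        """Revert to the previously live version; the oldest one is always kept."""
        if len(self.history) > 1:
            self.history.pop()
        return self.live
```

A failed validation leaves the current live version untouched. A rollback simply steps back through the promotion history.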

A/B testing frameworks enable safe deployment of model updates. Route a small share of live traffic to the new model and compare it against the current version, so that an underperforming candidate affects only a fraction of production requests.
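A common building block for such tests is deterministic traffic splitting. Hashing a stable user identifier means each user consistently sees the same model version across requests. The function name and the 10% default share are assumptions.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, treatment_share: float = 0.1) -> str:
    """Deterministically assign a user to 'candidate' or 'control'."""
    # Salting with the experiment name gives each experiment an independent split.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    # Keep the top 53 bits so the division is exact and the result lies in [0, 1).
    bucket = (int.from_bytes(digest[:8], "big") >> 11) / 2**53
    return "candidate" if bucket < treatment_share else "control"
```

Because assignment depends only on the identifiers, no lookup table is needed. Any service in any environment computes the same split.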

Performance monitoring provides continuous visibility into model behavior. Track accuracy metrics, response times, and resource utilization to identify issues before they impact users.
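Here is a minimal sliding-window monitor. It assumes per-request latencies are fed to it, along with correctness flags whenever labels become available. The window size and thresholds are placeholders.

```python
from collections import deque
from typing import Deque, List, Optional


class ModelMonitor:
    """Sliding-window monitor that flags latency and accuracy breaches."""

    def __init__(self, window: int = 500, p95_limit_ms: float = 300.0,
                 min_accuracy: float = 0.9) -> None:
        # deque(maxlen=...) drops the oldest item once the window is full.
        self.latencies: Deque[float] = deque(maxlen=window)
        self.outcomes: Deque[bool] = deque(maxlen=window)
        self.p95_limit_ms = p95_limit_ms
        self.min_accuracy = min_accuracy

    def observe(self, latency_ms: float, correct: Optional[bool] = None) -> None:
        self.latencies.append(latency_ms)
        # Ground truth often arrives later, or never, so it is optional.
        if correct is not None:
            self.outcomes.append(correct)

    def p95(self) -> float:
        """Nearest-rank 95th percentile of the latencies in the window."""
        if not self.latencies:
            raise ValueError("no observations yet")
        ordered = sorted(self.latencies)
        rank = (95 * len(ordered) + 99) // 100  # integer ceil(0.95 * n)
        return ordered[rank - 1]

    def alerts(self) -> List[str]:
        found = []
        if self.latencies and self.p95() > self.p95_limit_ms:
            found.append("latency")
        if self.outcomes and sum(self.outcomes) / len(self.outcomes) < self.min_accuracy:
            found.append("accuracy")
        return found
```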

AI ethics implementation ensures responsible use of artificial intelligence. Establish guidelines for bias detection, fairness assessment, and transparent decision-making processes.
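Bias detection often starts with simple group-level metrics. The sketch below computes the demographic parity gap: the largest difference in positive-prediction rates between groups. It is only one of several fairness metrics, and deciding what gap is acceptable is a policy question, not a technical one.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def positive_rates(records: Iterable[Tuple[str, int]]) -> Dict[str, float]:
    """records: (group, prediction) pairs, where prediction 1 is the positive outcome."""
    totals: Dict[str, int] = defaultdict(int)
    positives: Dict[str, int] = defaultdict(int)
    for group, pred in records:
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_gap(records: Iterable[Tuple[str, int]]) -> float:
    """Largest difference in positive-prediction rate between any two groups."""
    rates = positive_rates(records)
    return max(rates.values()) - min(rates.values())
```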

Model explainability requirements vary by industry and use case. Implement tools and processes that provide appropriate levels of transparency for stakeholders and regulators.

Technology evolves rapidly, and your hybrid AI deployment must adapt to emerging capabilities and changing business requirements.

Edge AI capabilities bring intelligence closer to data sources, reducing latency and bandwidth requirements. Plan for edge deployment scenarios that complement your hybrid architecture.

Consider how emerging technologies like quantum computing and 5G networks might impact your AI deployment strategy. Design flexible architectures that can incorporate new capabilities as they mature.

Technology roadmap development helps align AI investments with business strategy. Regular assessment of emerging technologies and vendor capabilities ensures your deployment remains competitive.

Continuous improvement frameworks enable ongoing optimization of your hybrid AI deployment. Establish metrics and processes for measuring success and identifying enhancement opportunities.

Hybrid AI deployment combines on-premise and cloud-based AI infrastructure to balance security, performance, and scalability while maintaining data control and regulatory compliance.

Consider factors like data sensitivity, regulatory requirements, existing infrastructure, budget constraints, and performance needs to determine the optimal deployment strategy.

Key security considerations include data encryption, access controls, network security, compliance requirements, and maintaining consistent security policies across environments.

Implementation timelines typically range from 6 to 18 months, depending on complexity, existing infrastructure, organizational readiness, and the scope of the deployment.

Compared with pure cloud deployments, hybrid deployments often have higher initial costs but can provide long-term savings through optimized resource utilization and reduced data transfer costs.

Hybrid AI deployment is becoming a central pattern for enterprise artificial intelligence, offering the flexibility to optimize workload placement while maintaining security and control. Success requires careful planning, robust architecture design, and ongoing optimization based on evolving business needs.

Organizations that embrace hybrid AI deployment strategies position themselves to leverage the full potential of artificial intelligence while maintaining operational control and cost efficiency. The key lies in understanding your unique requirements and designing solutions that balance innovation with practical constraints.