Breaking Free: AI Strategies for Vendor Lock-In

Enterprise leaders face a critical decision point in their AI journey. While the promise of artificial intelligence drives innovation forward, the risk of vendor lock-in threatens long-term strategic flexibility. Organizations that rush into AI adoption without considering vendor independence often find themselves trapped in costly, restrictive ecosystems that limit their ability to adapt and scale.

This challenge becomes more complex as AI technologies evolve rapidly. The decisions you make today about AI platforms and providers will shape your organization's technological freedom for years to come. Understanding how to use AI solutions to avoid vendor lock-in lets enterprises harness AI's transformative power while keeping control over their technological destiny.

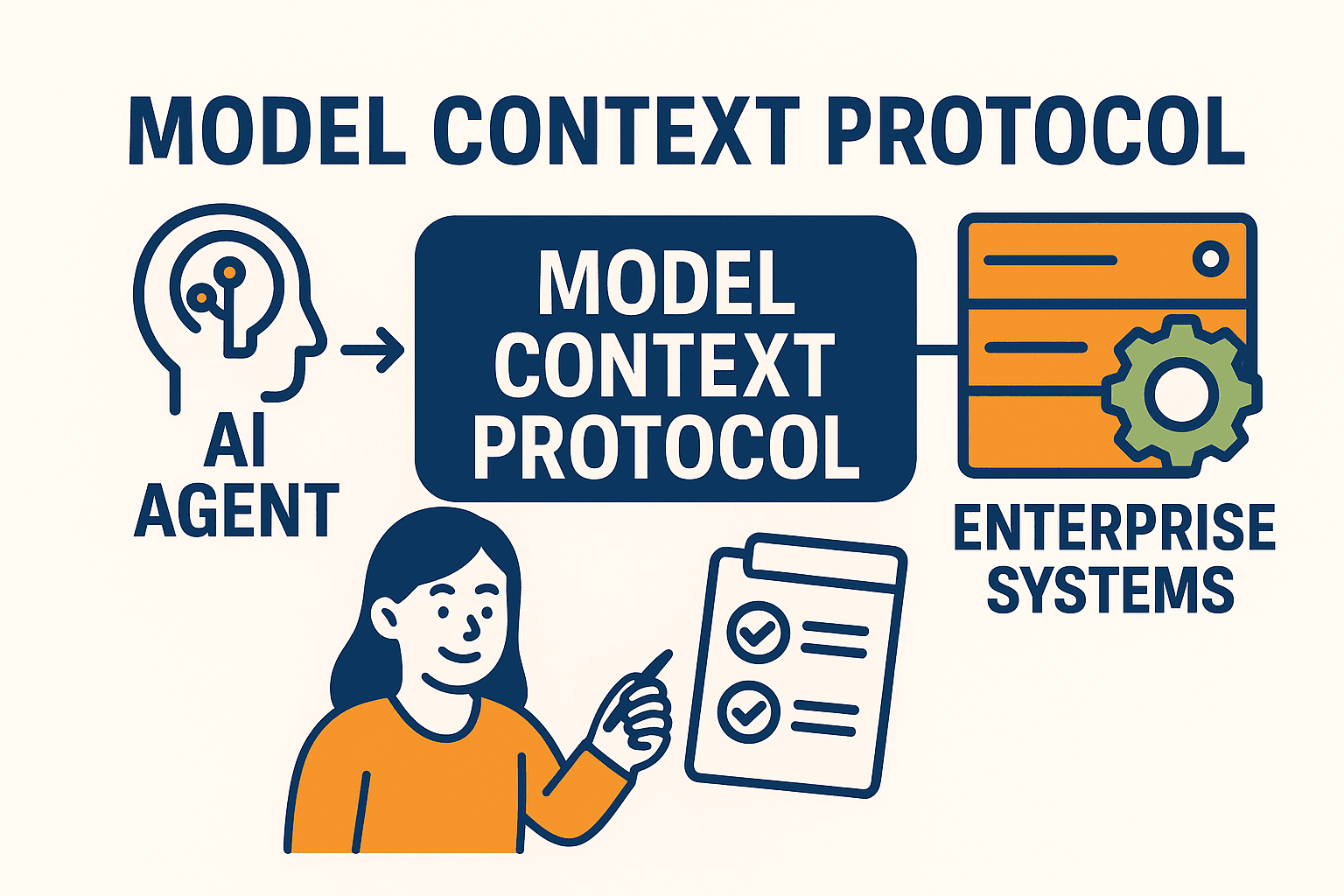

AI vendor lock-in occurs when organizations become dependent on a specific provider's proprietary technologies, making it difficult or costly to switch to alternative solutions. Unlike traditional software dependencies, AI vendor lock-in presents unique challenges that can severely impact business agility.

The complexity of AI systems creates multiple layers of dependency. Your organization might rely on proprietary data formats, specialized APIs, custom model architectures, or integrated toolchains that work exclusively within one vendor's ecosystem. These dependencies compound over time, making migration increasingly difficult and expensive.

AI vendor lock-in differs significantly from traditional software dependencies. Machine learning models require extensive training data, specialized infrastructure, and continuous optimization. When these components become tied to a single vendor, organizations face several critical risks:

Data becomes trapped in proprietary formats that resist easy export. Model architectures rely on vendor-specific frameworks that cannot transfer to other platforms. Integration patterns lock organizations into specific cloud services and APIs. Training pipelines depend on proprietary tools that create operational dependencies.

The financial impact compounds as AI initiatives scale. Organizations often start with attractive introductory pricing, only to discover escalating costs as their usage increases. Without alternatives, enterprises have limited negotiating power and face unpredictable pricing changes.

Forward-thinking organizations use AI itself to identify and prevent vendor dependencies before they become problematic. Modern AI solutions for avoiding vendor lock-in provide tools for risk assessment and dependency management.

Automated contract analysis tools scan vendor agreements to identify restrictive clauses and potential lock-in mechanisms. These systems flag concerning terms like exclusive data usage rights, proprietary format requirements, or restrictive migration policies. Machine learning algorithms analyze contract language patterns to predict future dependency risks.
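To make the clause-flagging idea concrete, here is a minimal sketch in Python. It uses plain regular expressions rather than machine learning, and the pattern set, the `flag_clauses` helper, and the sample contract text are all hypothetical illustrations; a production tool would need legal review and a much richer rule set.

```python
import re

# Hypothetical patterns for the kinds of terms mentioned above.
LOCK_IN_PATTERNS = {
    "exclusive data usage rights": re.compile(
        r"exclusive\s+(?:right|license)s?\s+to\s+(?:use\s+)?(?:customer\s+)?data",
        re.IGNORECASE,
    ),
    "proprietary format requirement": re.compile(r"proprietary\s+format", re.IGNORECASE),
    "restrictive migration policy": re.compile(
        r"(?:export|migration)\s+fee|no\s+obligation\s+to\s+(?:export|return)",
        re.IGNORECASE,
    ),
}


def flag_clauses(contract_text):
    """Return (clause_number, label, clause_text) for each clause matching a pattern."""
    findings = []
    # Treat every non-empty line as one clause (a simplifying assumption).
    clauses = [line.strip() for line in contract_text.split("\n") if line.strip()]
    for number, clause in enumerate(clauses, start=1):
        for label, pattern in LOCK_IN_PATTERNS.items():
            if pattern.search(clause):
                findings.append((number, label, clause))
    return findings


SAMPLE = """Provider is granted an exclusive license to use Customer Data for model improvement.
Customer may terminate with 30 days notice.
All model artifacts are stored in Provider's proprietary format.
Provider may charge an export fee for bulk data retrieval."""

for number, label, _clause in flag_clauses(SAMPLE):
    print(f"Clause {number}: {label}")
```

Keyword rules like these only surface candidates for a human reviewer; they do not replace reading the agreement.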

AI-powered dependency mapping creates visual representations of your technology stack, highlighting potential single points of failure. These tools continuously monitor your infrastructure to identify growing dependencies and suggest alternative architectures that maintain flexibility.
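A dependency map can start as something very simple. The sketch below assumes a hand-maintained inventory (the `STACK` dictionary, its component names, and the vendor labels are all made up) and computes what fraction of components rely on each vendor, flagging any vendor above a chosen threshold.

```python
from collections import defaultdict

# Hypothetical inventory: component -> list of (service, vendor) it depends on.
STACK = {
    "recommendation-api": [("managed-model-endpoint", "VendorA"), ("postgres", "self-hosted")],
    "training-pipeline": [("managed-model-endpoint", "VendorA"), ("feature-store", "VendorA")],
    "analytics-dashboard": [("feature-store", "VendorA"), ("postgres", "self-hosted")],
    "chat-assistant": [("llm-api", "VendorB")],
}


def vendor_share(stack):
    """Fraction of components that depend on each vendor at least once."""
    dependents = defaultdict(set)
    for component, deps in stack.items():
        for _service, vendor in deps:
            dependents[vendor].add(component)
    total = len(stack)
    return {vendor: len(components) / total for vendor, components in dependents.items()}


def concentration_risks(stack, threshold=0.5, ignore=("self-hosted",)):
    """Vendors that more than `threshold` of components depend on."""
    shares = vendor_share(stack)
    return sorted(v for v, share in shares.items() if share > threshold and v not in ignore)


print(vendor_share(STACK))
print(concentration_risks(STACK))
```

The 0.5 threshold is an arbitrary starting point; the useful part is rerunning the same calculation over time to see whether concentration is growing.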

Compliance monitoring systems automatically track adherence to open standards across your AI implementations. When systems drift toward proprietary solutions, these tools alert stakeholders and recommend corrective actions to maintain vendor independence.

Expert Insight

Organizations that implement vendor lock-in prevention strategies from the beginning reduce their total cost of AI ownership by up to 40% over five years, while maintaining the flexibility to adopt emerging technologies as they mature.

The open source ecosystem provides robust alternatives to proprietary AI platforms, enabling organizations to build flexible IT infrastructure without vendor dependencies. These solutions offer transparency, community support, and the freedom to modify systems according to specific business needs.

Leading open source alternatives include frameworks like TensorFlow and PyTorch for machine learning development and Apache Spark for large-scale data processing. Container orchestration platforms such as Kubernetes provide vendor-neutral deployment environments that work across multiple cloud providers.

Open source MLOps platforms enable organizations to create end-to-end AI workflows without proprietary dependencies. Tools like MLflow, Kubeflow, and Apache Airflow provide comprehensive capabilities for model development, deployment, and monitoring across diverse environments.

Community-driven model repositories offer pre-trained models and architectures that work across different platforms. These resources reduce development time while maintaining the flexibility to deploy models wherever business needs dictate.

Multi-cloud solutions distribute AI workloads across multiple providers, reducing dependency on any single vendor while optimizing performance and costs. This approach requires careful architecture planning but delivers significant strategic advantages.

Effective cloud migration strategies for AI workloads involve designing systems with portability in mind from the beginning. Container-based deployments, standardized APIs, and cloud-agnostic data formats enable seamless movement between providers as business needs evolve.

Modern AI architectures use Kubernetes orchestration to abstract away many cloud-specific services. This approach lets organizations deploy the same AI workloads across different cloud providers with little or no modification, maintaining consistency while avoiding platform dependency.

Load balancing strategies distribute AI inference requests across multiple cloud providers, ensuring high availability while preventing over-reliance on single platforms. This approach also enables cost optimization by routing workloads to the most cost-effective provider for specific tasks.
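As a rough illustration of cost-aware routing with failover, the sketch below picks the cheapest provider that is currently healthy. The `Provider` class, provider names, and prices are invented for the example; real routing would also weigh latency, quotas, and data residency.

```python
from dataclasses import dataclass


@dataclass
class Provider:
    name: str
    cost_per_1k_requests: float  # hypothetical price, not a real quote
    healthy: bool = True


def choose_provider(providers):
    """Return the cheapest healthy provider, or raise if none is available."""
    candidates = [p for p in providers if p.healthy]
    if not candidates:
        raise RuntimeError("no healthy provider available")
    return min(candidates, key=lambda p: p.cost_per_1k_requests)


PROVIDERS = [
    Provider("cloud-a", cost_per_1k_requests=0.40),
    Provider("cloud-b", cost_per_1k_requests=0.25),
    Provider("cloud-c", cost_per_1k_requests=0.30),
]

print(choose_provider(PROVIDERS).name)  # cheapest healthy provider
PROVIDERS[1].healthy = False            # simulate an outage at cloud-b
print(choose_provider(PROVIDERS).name)  # falls back to the next cheapest
```

Routing like this only works if the same model or an equivalent one is actually deployed with every provider, which is where portable packaging matters.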

Data portability forms the foundation of vendor independence in AI systems. Organizations must ensure that training data, model artifacts, and operational metrics remain accessible and transferable regardless of the underlying platform.

Standardized data formats like Apache Parquet, JSON, and CSV help keep AI training datasets vendor-neutral. Model serialization standards such as ONNX (Open Neural Network Exchange) let trained models run across different frameworks and platforms, often without modification, provided the target runtime supports the operators the model uses.
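The following sketch shows what a vendor-neutral export might look like using only the Python standard library. The sample records and helper names are hypothetical. Parquet and ONNX are left out because they require third-party packages, but the same round-trip idea applies to them.

```python
import csv
import io
import json

# Hypothetical training records.
RECORDS = [
    {"id": 1, "text": "refund request", "label": "billing"},
    {"id": 2, "text": "password reset", "label": "account"},
]


def to_jsonl(records):
    """Serialize records as JSON Lines: one JSON object per line."""
    return "\n".join(json.dumps(r, sort_keys=True) for r in records)


def from_jsonl(text):
    return [json.loads(line) for line in text.splitlines() if line]


def to_csv(records, fieldnames):
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames)
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


def from_csv(text):
    # CSV carries no type information, so every value comes back as a string.
    return list(csv.DictReader(io.StringIO(text)))


assert from_jsonl(to_jsonl(RECORDS)) == RECORDS
print(from_csv(to_csv(RECORDS, ["id", "text", "label"])))
```

Note the difference between the two formats: JSON Lines preserves numeric types on the round trip, while CSV needs a documented schema alongside it to restore them.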

Interoperability solutions focus on creating consistent interfaces across different AI platforms. RESTful APIs with standardized schemas let applications switch between AI service providers with minimal code changes, maintaining operational continuity during vendor transitions.
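One common way to get a consistent interface is a thin adapter layer inside the application. The sketch below is an in-process illustration rather than a REST schema, and everything in it is hypothetical: the `TextClassifier` protocol, both vendor adapters, and their stand-in logic. Real adapters would wrap each vendor's SDK or HTTP API.

```python
from typing import Protocol


class TextClassifier(Protocol):
    """The only interface application code is allowed to depend on."""

    def classify(self, text: str) -> str: ...


class VendorAClassifier:
    """Hypothetical adapter; a real one would call vendor A's service here."""

    def classify(self, text: str) -> str:
        return "billing" if "invoice" in text.lower() else "other"


class VendorBClassifier:
    """Hypothetical adapter for a second provider with the same contract."""

    def classify(self, text: str) -> str:
        keywords = ("invoice", "refund", "charge")
        return "billing" if any(k in text.lower() for k in keywords) else "other"


def route_ticket(classifier: TextClassifier, text: str) -> str:
    # Application logic sees only the shared interface, never a vendor type.
    return f"queue:{classifier.classify(text)}"


print(route_ticket(VendorAClassifier(), "Invoice is missing"))
print(route_ticket(VendorBClassifier(), "Please refund my order"))
```

Switching providers then means writing one new adapter and changing which object is passed in, while `route_ticket` and its callers stay untouched.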

Data governance frameworks establish policies and procedures for maintaining vendor independence throughout the AI lifecycle. These frameworks define data ownership, export procedures, and migration protocols that protect organizational flexibility.

Successfully avoiding platform dependency requires systematic planning and ongoing vigilance. Organizations need practical frameworks for evaluating vendors, designing architectures, and monitoring dependencies over time.

Before selecting AI platforms, evaluate data export capabilities and supported formats. Assess API documentation for proprietary dependencies and integration requirements. Review licensing terms for restrictions on model portability and data usage rights.

Negotiate contract terms that include explicit data export rights, model portability guarantees, and reasonable termination procedures. Establish service level agreements that protect your ability to migrate workloads within defined timeframes.

Implement regular dependency audits to identify growing vendor relationships that might compromise flexibility. Monitor usage patterns to detect over-reliance on specific platforms or services. Establish alerts for contract changes or policy updates that might affect vendor independence.
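A dependency audit can begin with a spend-concentration check. In this sketch the vendor names, spend figures, and the 60% threshold are assumptions chosen for illustration, not recommended values.

```python
def audit_spend(spend_by_vendor, max_share=0.6):
    """Return (vendor, share) pairs whose share of total spend exceeds max_share."""
    total = sum(spend_by_vendor.values())
    if total <= 0:
        return []
    flagged = [
        (vendor, amount / total)
        for vendor, amount in spend_by_vendor.items()
        if amount / total > max_share
    ]
    return sorted(flagged)


# Hypothetical monthly AI spend per vendor.
MONTHLY_SPEND = {"VendorA": 7500, "VendorB": 2500}

for vendor, share in audit_spend(MONTHLY_SPEND):
    print(f"ALERT: {vendor} accounts for {share:.0%} of AI spend")
```

The same function can be run over request counts or stored data volume instead of spend, depending on which kind of over-reliance you want to track.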

Create migration testing procedures that validate your ability to move workloads between platforms. Regular testing ensures that theoretical portability translates into practical capability when business needs require vendor changes.
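One piece of a migration test is checking that a model gives the same answers on the target platform as on the source. The sketch below compares two lists of numeric outputs within a tolerance. The function name, tolerance, and sample scores are hypothetical, and collecting the outputs from each platform is left out.

```python
import math


def parity_report(source_outputs, target_outputs, tolerance=1e-6):
    """Compare outputs from two platforms and list the indices that disagree."""
    if len(source_outputs) != len(target_outputs):
        raise ValueError("output lists must have the same length")
    mismatches = [
        index
        for index, (a, b) in enumerate(zip(source_outputs, target_outputs))
        if not math.isclose(a, b, rel_tol=0.0, abs_tol=tolerance)
    ]
    return {
        "checked": len(source_outputs),
        "mismatches": mismatches,
        "passed": not mismatches,
    }


# Hypothetical scores for the same inputs on the current and candidate platforms.
current = [0.12, 0.87, 0.45]
candidate = [0.12, 0.87, 0.44]

print(parity_report(current, candidate))
```

Running a check like this on a fixed evaluation set during each scheduled migration drill turns portability from an assumption into a measured result.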

Organizations should prioritize open standards, design portable architectures, negotiate flexible contracts, and regularly assess vendor dependencies. Using container-based deployments and standardized data formats provides the foundation for vendor independence.

Warning signs include difficulty exporting training data, proprietary model formats that resist migration, escalating costs without alternatives, and integration patterns that require specific vendor services. Limited negotiating power and restricted technology choices also indicate problematic dependencies.

Multi-cloud approaches distribute workloads across multiple providers, reducing dependency on any single vendor. This strategy enables cost optimization, improves negotiating position, and provides fallback options if one provider changes terms or experiences service issues.

Open source solutions provide transparent, community-supported alternatives to proprietary platforms. They enable organizations to modify systems according to specific needs, avoid licensing restrictions, and maintain control over their technology stack evolution.

Enterprises should use standardized data formats, implement robust data governance frameworks, negotiate explicit export rights, and regularly test migration procedures. Maintaining vendor-neutral data storage and processing pipelines ensures long-term flexibility.

Breaking free from AI vendor lock-in requires strategic planning, technical expertise, and ongoing vigilance. Organizations that prioritize vendor independence from the beginning position themselves to adapt quickly to changing business needs and emerging technologies. The investment in flexible, portable AI architectures pays dividends through reduced costs, improved negotiating power, and the freedom to innovate without constraints.

As AI continues to transform business operations, maintaining control over your technological destiny becomes increasingly critical. By implementing comprehensive vendor independence strategies, enterprises can harness AI's transformative power while preserving the flexibility needed for long-term success. Consider exploring integrated platforms that prioritize vendor neutrality and provide the tools needed to maintain strategic flexibility in your AI journey.